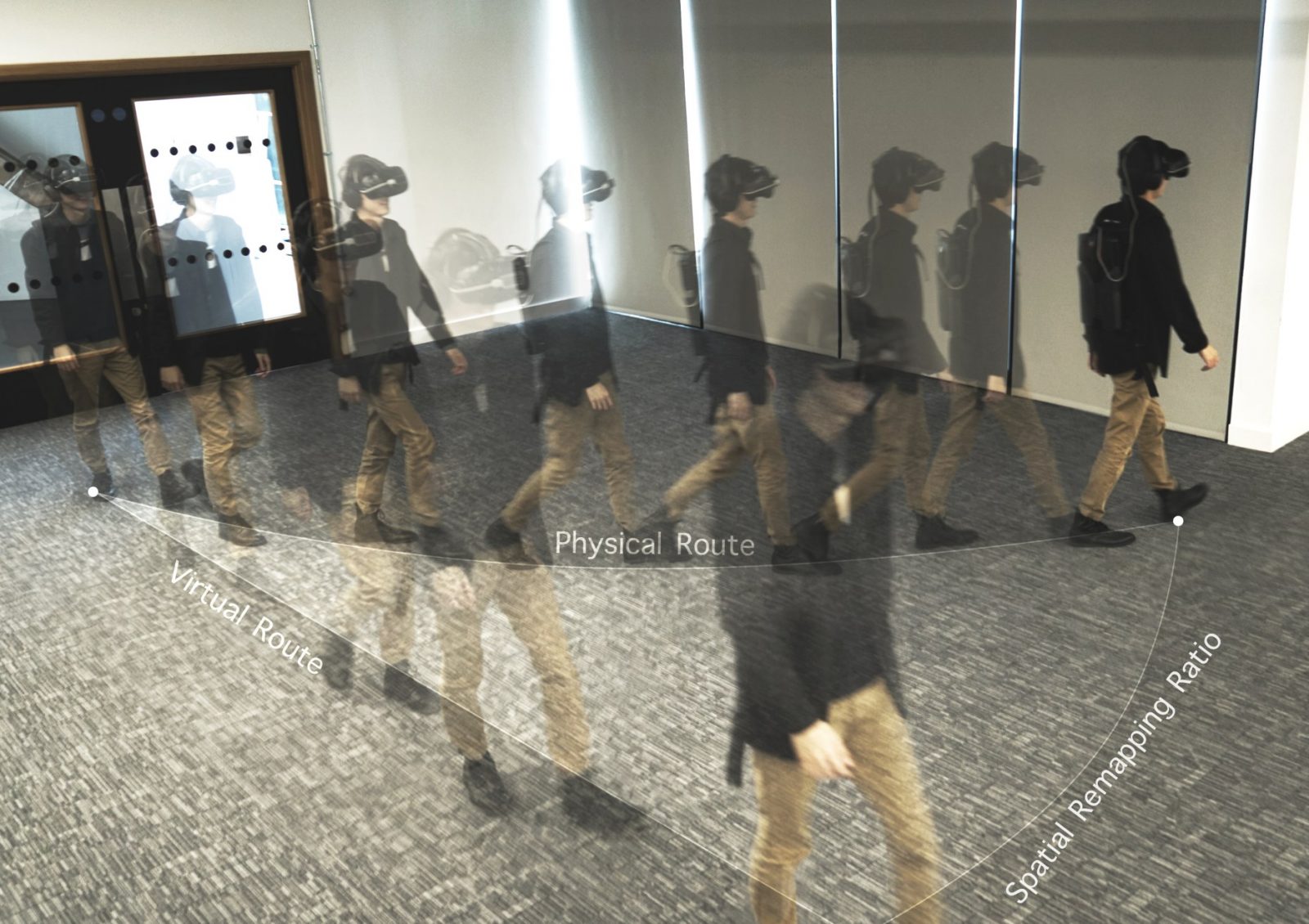

To address this, we have developed our Mixed Reality Redirected Walking Algorithm. It remaps the physical space to a larger virtual space by redirecting the user’s walking path. This solution delivers the rich content of a virtual experience while retaining the user’s natural walking habits in physical space. In this way, we achieve our goal of mixing virtual and physical space.

This kind of mixed reality space also has the following characteristics. On the one hand, users really walk in these spaces and can freely choose where they want to go. Currently, our Mixed Reality Redirected Walking Algorithm can map a 4 m × 8 m physical space to infinite virtual space.

On the other hand, this mixed reality space can also provide true tactile feedback.

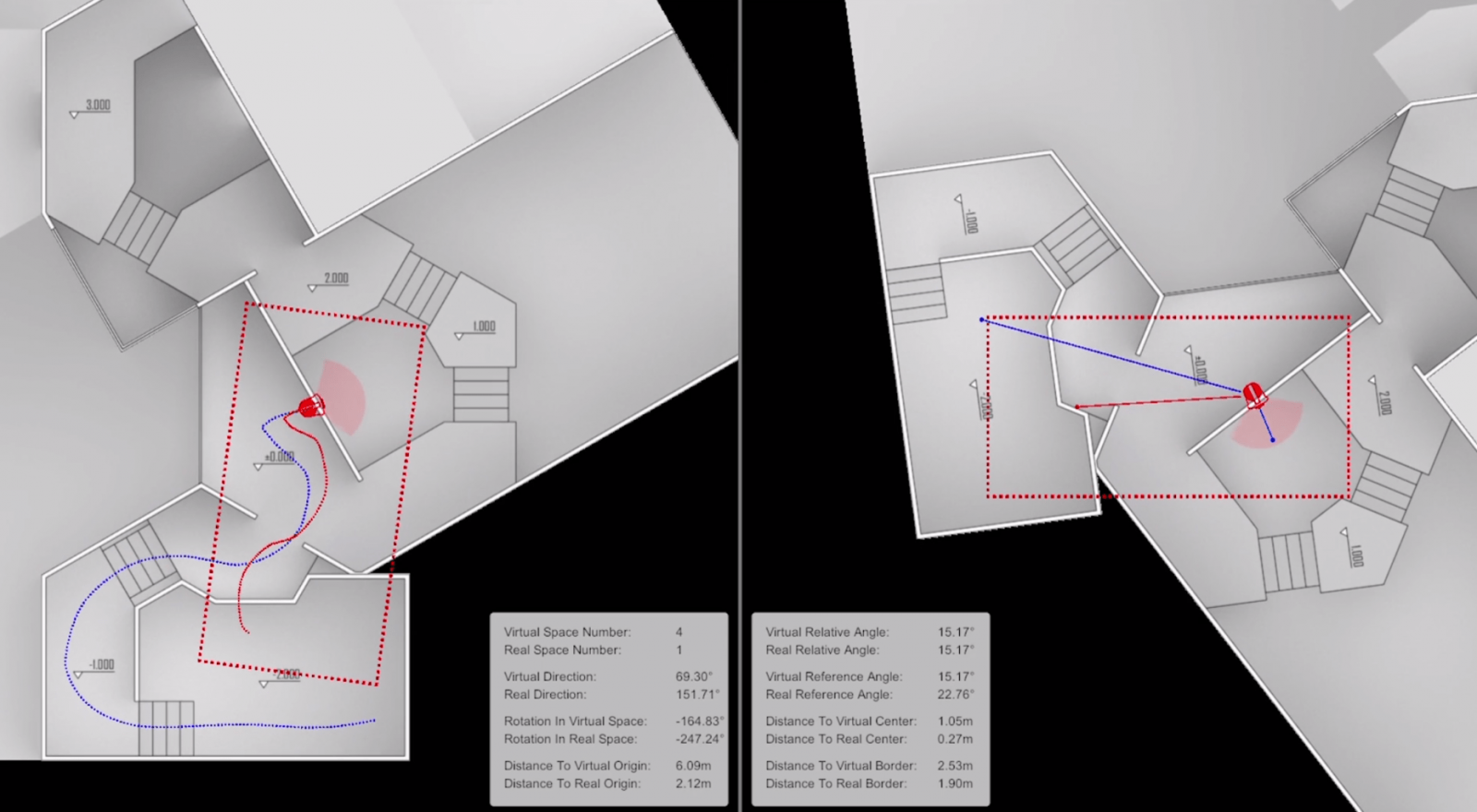

Specifically, we implement this mapping in the following steps. First, the physical space is scanned to determine its size and boundaries. The physical space is then divided into two (or more) physical spatial units to meet the algorithm’s size requirements for spatial mapping.
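The division step can be illustrated with a minimal sketch. It assumes the scan has already reduced the room to an axis-aligned rectangle, and that the units are equal slices along the longer side; neither assumption comes from the algorithm itself, and the names `Unit` and `split_space` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Unit:
    """One rectangular physical spatial unit, in metres (hypothetical type)."""
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    @property
    def center(self):
        # The center point later serves as the origin of the unit's polar system.
        return ((self.x_min + self.x_max) / 2, (self.y_min + self.y_max) / 2)


def split_space(width, length, count=2):
    """Split a width x length rectangle into `count` equal units.

    Assumption: the cut runs across the longer side, so a 4 m x 8 m room
    becomes two 4 m x 4 m units.
    """
    if count < 1:
        raise ValueError("count must be at least 1")
    units = []
    if length >= width:
        step = length / count
        for i in range(count):
            units.append(Unit(0.0, i * step, width, (i + 1) * step))
    else:
        step = width / count
        for i in range(count):
            units.append(Unit(i * step, 0.0, (i + 1) * step, length))
    return units


units = split_space(4.0, 8.0)
for unit in units:
    print(unit, unit.center)
```

A real scan would rarely yield a perfect rectangle, so a production version would need to fit the units inside the scanned boundary instead.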

Then, for each physical space unit, a polar coordinate system is established with the center point of the unit as the origin. From the coordinates of each point in the unit, the designed deflection value between the physical space and the virtual space is calculated and applied. Accumulating these deflection values maps the limited physical space unit to a larger virtual space unit, whose shape and magnification ratio can be adjusted as needed. Through a specific switch between the two physical space units, users can walk seamlessly between infinite virtual space units. This is how the limited physical space is mapped to infinite virtual space.
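The idea of accumulating deflection values can be sketched as follows. The deflection function here is purely an assumption for illustration: it grows linearly with the distance `r` from the unit center and ignores the angle `theta`. The actual designed deflection values are not specified in this article, and `deflection_rate`, `map_path`, `max_rate`, and `max_radius` are hypothetical names.

```python
import math


def deflection_rate(r, theta, max_rate, max_radius=2.0):
    """Hypothetical deflection (radians per metre walked) at polar point (r, theta).

    Assumption: deflection scales linearly with r and is capped at max_rate.
    theta is accepted so that a design could vary by direction; it is unused here.
    """
    return max_rate * min(r / max_radius, 1.0)


def map_path(physical_path, center=(2.0, 2.0), max_rate=0.1):
    """Map a walked physical path to a virtual path by accumulating deflection."""
    vx, vy = physical_path[0]      # virtual and physical start at the same point
    offset = 0.0                   # accumulated deflection, in radians
    virtual_path = [(vx, vy)]
    for (x0, y0), (x1, y1) in zip(physical_path, physical_path[1:]):
        dx, dy = x1 - x0, y1 - y0
        step = math.hypot(dx, dy)
        # Polar coordinates of the new point, relative to the unit center.
        r = math.hypot(x1 - center[0], y1 - center[1])
        theta = math.atan2(y1 - center[1], x1 - center[0])
        offset += deflection_rate(r, theta, max_rate) * step
        # The virtual step keeps the walked distance but turns by the offset.
        heading = math.atan2(dy, dx) + offset
        vx += step * math.cos(heading)
        vy += step * math.sin(heading)
        virtual_path.append((vx, vy))
    return virtual_path


# A closed physical loop of radius 1 m around the unit center.
n = 360
loop = [(2.0 + math.cos(2 * math.pi * i / n), 2.0 + math.sin(2 * math.pi * i / n))
        for i in range(n + 1)]
virtual = map_path(loop, max_rate=-2.0)
print(virtual[0], virtual[-1])
```

In this sketch the physical loop returns to its starting point, while the virtual path ends roughly one circumference away, which is the sense in which a bounded physical unit can cover a larger virtual one. The rate used in the example is chosen only to make the effect obvious.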

Next, depending on the design of the target virtual space, we can choose among different mapping methods. This preserves the freedom to design the virtual spatial experience while still establishing a mapping between virtual space and physical space.

Finally, this pre-designed mapping method calculates the users’ virtual location and orientation in real time as they walk in the physical space. The computer then renders the corresponding visual and auditory content. This keeps the physical and virtual spaces synchronized and provides a mixed reality spatial experience.
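A per-frame version of this step might look like the sketch below. It assumes the headset reports a tracked 2D position and yaw each frame, and that the chosen mapping method is supplied as a function returning a deflection rate for a physical position. `Pose`, `VirtualPoseTracker`, and `rate_fn` are hypothetical names, not part of the published algorithm.

```python
import math
from dataclasses import dataclass
from typing import Callable


@dataclass
class Pose:
    x: float    # metres
    y: float    # metres
    yaw: float  # radians


class VirtualPoseTracker:
    """Turns tracked physical poses into virtual poses, one frame at a time."""

    def __init__(self, start: Pose, rate_fn: Callable[[float, float], float]):
        self.physical = start
        self.virtual = Pose(start.x, start.y, start.yaw)
        self.offset = 0.0        # accumulated deflection, in radians
        self.rate_fn = rate_fn   # assumed mapping: position -> radians per metre

    def update(self, tracked: Pose) -> Pose:
        dx = tracked.x - self.physical.x
        dy = tracked.y - self.physical.y
        step = math.hypot(dx, dy)
        self.offset += self.rate_fn(tracked.x, tracked.y) * step
        # Rotate this frame's physical displacement by the accumulated offset.
        c, s = math.cos(self.offset), math.sin(self.offset)
        self.virtual = Pose(
            self.virtual.x + c * dx - s * dy,
            self.virtual.y + s * dx + c * dy,
            tracked.yaw + self.offset,
        )
        self.physical = tracked
        return self.virtual


# Example with a constant, made-up deflection rate of 0.5 rad/m.
tracker = VirtualPoseTracker(Pose(0.0, 0.0, 0.0), lambda x, y: 0.5)
print(tracker.update(Pose(1.0, 0.0, 0.0)))
```

The returned pose is what a renderer would use to place the virtual camera and audio listener for that frame.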

This new way of walking greatly enhances the mixed reality experience. It gives users a more natural way to interact in mixed reality, without sacrificing any part of the experience and without requiring additional hardware. This can greatly expand the application prospects of mixed reality and make it more likely to enter users’ daily lives.